In our SecOps platform, we are moving all our reference lists to data tables, since reference lists are on a deprecation path. We currently have two reference lists called “known Malicious hashes”, which together contain 2 lakh (200,000) hashes, with 1 lakh (100,000) hashes in each list. A reference list allows 1 lakh entries, whereas a data table allows only 10,000 entries, so I had to create 20 data tables and move all the hashes into them. Moving just 2 lists into 20 tables was very time-consuming because of this limit. I would like to know if there is any way we can fix this part first.

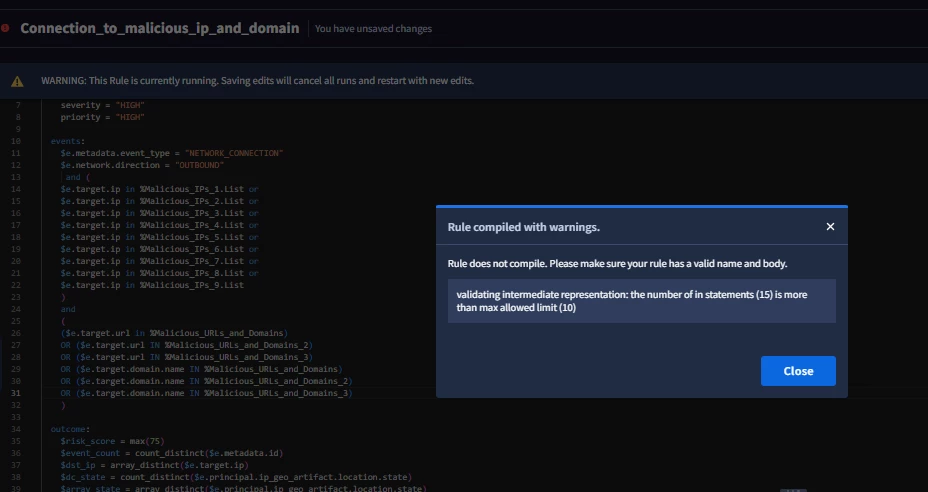
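For anyone doing the same migration, here is a minimal sketch of the split itself: 200,000 hashes at 10,000 rows per table gives 20 files. It only prepares local CSV files and does not call any SecOps API. The file prefix, the `hash` column name, and the helper names are hypothetical, and it assumes the hashes have already been exported to a plain Python list.

```python
import csv
from pathlib import Path

MAX_ROWS_PER_TABLE = 10_000  # per-table limit reported in this post


def chunk(items, size):
    """Yield consecutive slices of `items`, each with at most `size` entries."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def write_table_csvs(hashes, out_dir, prefix="known_malicious_hashes"):
    """Write one single-column CSV per chunk and return the file paths.

    `prefix` and the "hash" header are hypothetical names for illustration.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    paths = []
    for index, part in enumerate(chunk(hashes, MAX_ROWS_PER_TABLE), start=1):
        path = out_dir / f"{prefix}_{index:02d}.csv"
        with path.open("w", newline="") as handle:
            writer = csv.writer(handle)
            writer.writerow(["hash"])            # header row
            writer.writerows([h] for h in part)  # one hash per row
        paths.append(path)
    return paths
```

Calling `write_table_csvs(all_hashes, "tables")` on the combined contents of both lists would produce 20 files, one per data table, which still have to be imported by whatever upload method is available to you.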

Next, when I try to use those data tables in a rule, I hit another limitation: “The number of in statements is more than max allowed limit(10)”. Can you help?

Attached error message