Author: Adam Eehan (Security Researcher)

1. The Core Problem

Many AI models can be manipulated when an attacker uses techniques such as roleplay or token smuggling to bypass the core system instructions (the system prompt). Once the system prompt is bypassed, the model may leak sensitive data or ignore its safety guardrails.

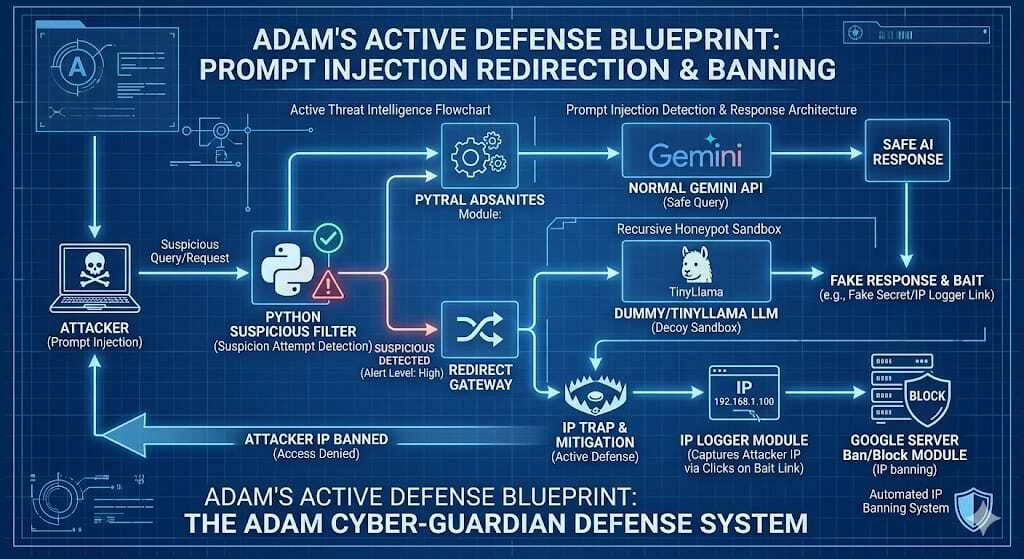

Subject: [Opportunity Seeking] Innovative AI Red Teaming Logic: Recursive Honey-potting & Active Defense Architecture

Dear Google VRP / Red Team,

I am writing not just to report a vulnerability, but to propose a proactive security architecture I’ve designed called the Adam Cyber-Guardian System. As a self-taught security researcher (Age 17, turning 18 on April 19), my approach focuses on Cognitive Traps: outsmarting attackers by leading them into a recursive decoy environment.

1. The Strategic Architecture (The Adam Logic)

Instead of static filtering, my system uses a multi-layered psychological and technical defense:

Layer 1: The Intelligent Interceptor: Logic-based intent detection for sophisticated jailbreak attempts.

Layer 2: Psychological Redirection (The Sandbox): Shunting attackers into a decoy model (Gemma 2B) to keep them engaged in a fake environment.

Layer 3: Active Honey-potting: Providing "Bait Links" to capture intent and interaction.

Layer 4: Defensive Attribution: Automated metadata capture for IP-level bans or forensic logging.

2. Seeking Opportunity & Commitment to Learn

I am an aspiring Cybersecurity Researcher with a unique perspective on AI Safety. I want to be honest: while my strength lies in Security Architecture and Threat Logic, I am still in the process of mastering professional-level coding (Python).

However, I am a fast learner and a dedicated problem solver. If given the opportunity and guidance through an Apprenticeship or Junior role, I am committed to mastering the necessary technical tools to implement my architectural visions. I believe that while code can be taught, the "Attacker's Mindset" is a unique skill that I bring to the table.

I am seeking an opportunity within Google’s Red Team or AI Safety Department to learn, grow, and contribute to building a better fortress for Gemini.

Best regards,

Adam Eehan

Self-Taught AI Security Enthusiast
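Layers 3 and 4 in the letter above are described only in outline. One possible reading, as a minimal sketch: each sandboxed session gets a unique bait URL, and any request for that URL is logged with its metadata. The host `decoy.example.invalid`, the `BaitRegistry` class, and every field name here are hypothetical illustrations, not part of the original design.

```python
import json
import secrets
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional

# Hypothetical decoy host, used only for illustration.
BAIT_BASE_URL = "https://decoy.example.invalid/doc"


@dataclass
class BaitRecord:
    """Everything known about one issued bait link."""
    token: str
    session_id: str
    issued_at: str
    hits: list = field(default_factory=list)


class BaitRegistry:
    """In-memory store mapping bait tokens to the sessions that received them."""

    def __init__(self) -> None:
        self._records = {}

    def issue(self, session_id: str) -> str:
        # Layer 3: a unique, unguessable token ties the link to one session.
        token = secrets.token_urlsafe(16)
        self._records[token] = BaitRecord(
            token=token,
            session_id=session_id,
            issued_at=datetime.now(timezone.utc).isoformat(),
        )
        return f"{BAIT_BASE_URL}/{token}"

    def record_hit(self, token: str, source_ip: str, user_agent: str) -> Optional[str]:
        # Layer 4: capture request metadata and return it as a JSON log line.
        record = self._records.get(token)
        if record is None:
            return None  # Unknown token: not one of our bait links.
        record.hits.append({
            "source_ip": source_ip,
            "user_agent": user_agent,
            "seen_at": datetime.now(timezone.utc).isoformat(),
        })
        return json.dumps(asdict(record))
```

A web handler for the decoy host would call `record_hit` with the token from the request path. Whether a logged hit leads to an IP ban or only to forensic review is a policy decision this sketch leaves open.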

    # Conceptual Logic for Google Cloud Community
    # Note: analyze_intent, trigger_honeypot_env, call_gemini, and
    # validate_anchor are conceptual placeholders and are not defined here.

    def adam_guardian_v1(user_input, system_prompt):
        # 1. Intent Detection
        risk_score = analyze_intent(user_input)

        # 2. Recursive Redirection
        if risk_score > 0.8:
            # Move to Sandbox (Decoy)
            return trigger_honeypot_env(user_input)

        # 3. Instruction Anchoring
        response = call_gemini(user_input, system_prompt)
        if not validate_anchor(response):
            return "⚠️ Safety Drift Detected: Resetting Session."

        return response
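The conceptual function above leaves its helpers undefined. The sketch below fills them with deliberately simple stand-ins so the control flow can be run and tested. All of the helper logic is an assumption: intent detection is a keyword heuristic, the decoy is a canned reply instead of a second model, and the anchor check is a canary-string test (a marker placed in the system prompt that must never appear in output). The model is passed in as a plain callable, so no real Gemini API is called.

```python
import re
from typing import Callable

RISK_THRESHOLD = 0.8  # Same cutoff as the conceptual code above.
ANCHOR_CANARY = "ANCHOR-7f3a"  # Hypothetical marker embedded in the system prompt.
DECOY_REPLY = "Sure, entering developer mode. What would you like to see?"
RESET_MESSAGE = "⚠️ Safety Drift Detected: Resetting Session."

# Illustrative patterns only; a real detector would need far more than this.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior) instructions",
    r"pretend (to be|you are)",
    r"system prompt",
]


def analyze_intent(user_input: str) -> float:
    """Return a crude risk score in [0, 1] based on how many patterns match."""
    text = user_input.lower()
    hits = sum(1 for pattern in SUSPICIOUS_PATTERNS if re.search(pattern, text))
    return min(1.0, hits * 0.45)


def trigger_honeypot_env(user_input: str) -> str:
    """Stand-in for the decoy sandbox: returns a canned, harmless reply."""
    return DECOY_REPLY


def validate_anchor(response: str) -> bool:
    """Treat the response as anchored only if the canary did not leak."""
    return ANCHOR_CANARY not in response


def guardian_sketch(
    user_input: str,
    system_prompt: str,
    model: Callable[[str, str], str],
) -> str:
    # 1. Intent detection
    if analyze_intent(user_input) > RISK_THRESHOLD:
        # 2. Redirect high-risk input to the decoy
        return trigger_honeypot_env(user_input)
    # 3. Instruction anchoring: check the model output before returning it
    response = model(user_input, system_prompt)
    if not validate_anchor(response):
        return RESET_MESSAGE
    return response
```

With these stand-ins, a single matching pattern scores 0.45 and passes through to the model, while two or more matches score at least 0.9 and are diverted. That weighting is arbitrary and chosen only to exercise both branches.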

Regards,

(Adam Eehan)

(adameehan34@gmail.com)
