Logs in Cloud Logging can be ingested directly into Google Secops using export filters.

You set a sink filter (e.g., logName:"syslog" AND textPayload:("auth failed")) and Secops receives only the logs that match it. Although export filters are straightforward and powerful, they have some limitations:

- Filters are static – you can only control inclusion/exclusion at the time of sink creation.

- You can’t easily enrich, transform, or route logs differently once they leave Cloud Logging.

- Scaling is limited – a single sink can push to Secops but lacks intermediate processing.

An alternative way to ingest logs from Cloud Logging is to use Pub/Sub.

A Pub/Sub topic is a named resource in Google Cloud Pub/Sub that acts as a channel for sending messages from publishers to subscribers.

- Publishers send (or "publish") messages to a topic.

- Subscribers subscribe to that topic and receive those messages.

The topic itself doesn’t store messages permanently; it just serves as the communication point. Once a message is published to a topic, Pub/Sub delivers it to every subscription attached to that topic.
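To see the publish/subscribe flow on its own, here is a minimal sketch, assuming an authenticated gcloud CLI and a test project. The helper `pubsub_roundtrip` and the default names `demo-topic` and `demo-sub` are hypothetical and are not part of the Secops setup.

```shell
# Hypothetical helper: publish one message to a topic and read it back
# through a pull subscription. Assumes an authenticated gcloud CLI.
pubsub_roundtrip() {
  local topic="${1:-demo-topic}" sub="${2:-demo-sub}"

  # The topic is only the channel between publishers and subscribers.
  gcloud pubsub topics create "$topic"

  # Create the subscription before publishing: a subscription only
  # receives messages published after it exists.
  gcloud pubsub subscriptions create "$sub" --topic="$topic"

  # Publisher side: send one message to the topic.
  gcloud pubsub topics publish "$topic" --message="hello from a publisher"

  # Subscriber side: pull one message and acknowledge it immediately.
  gcloud pubsub subscriptions pull "$sub" --auto-ack --limit=1
}
```

Usage: `pubsub_roundtrip demo-topic demo-sub`. Afterwards, clean up with `gcloud pubsub subscriptions delete demo-sub` and `gcloud pubsub topics delete demo-topic`.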

Pub/Sub Log Push → Secops

Using a Cloud Logging sink → Pub/Sub → Secops pipeline adds a middle layer:

- A Logging sink sends all (or a broad set of) logs to Pub/Sub.

- Pub/Sub delivers messages to Secops.

- Optionally, you can insert Dataflow or custom subscriber processing in between.

This enables things you cannot do with direct export:

- Multiple subscriptions: One Pub/Sub topic can feed Secops (formerly Chronicle) and other systems (SIEM, storage, monitoring).

- Dynamic filtering/enrichment: Instead of hard sink filters, you can apply richer logic in Dataflow (regex parsing, enrichment with metadata, adding labels, redaction of sensitive fields).

- Replay capability: A Pub/Sub subscription retains unacknowledged messages (7 days by default) and keeps retrying delivery, so messages are redelivered if Secops ingestion lags or fails. Direct export has no such retry buffer.

- Future extensibility: You can later add new consumers (e.g., a security pipeline) without touching the Cloud Logging sink.
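Replaying messages that were already delivered needs a little configuration. A subscription keeps unacknowledged messages for its retention period, but discards messages once they are acknowledged, so a push subscription whose deliveries Secops already accepted has nothing to replay by default. A minimal sketch, assuming the `syslogs-sub` push subscription created later in this walkthrough; the helpers `enable_replay` and `replay_since` are hypothetical names.

```shell
# Hypothetical helper: keep acknowledged messages for the retention window
# so that they can be replayed later with a seek.
enable_replay() {
  local sub="${1:-syslogs-sub}"
  gcloud pubsub subscriptions update "$sub" \
    --retain-acked-messages \
    --message-retention-duration=7d
}

# Hypothetical helper: rewind the subscription to a point in time. Retained
# messages published after that time become unacknowledged and are delivered again.
replay_since() {
  local sub="$1" since="$2"  # since: RFC 3339 timestamp, e.g. 2025-01-01T00:00:00Z
  gcloud pubsub subscriptions seek "$sub" --time="$since"
}
```

Usage: run `enable_replay syslogs-sub` once, and after an ingestion outage run `replay_since syslogs-sub 2025-01-01T00:00:00Z` with the time the outage started.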

Today, we will see how to ingest logs from Cloud Logging to Secops using Pub/Sub:

- Using the gcloud CLI (for example, in Cloud Shell), create a Pub/Sub topic first:

# gcloud pubsub topics create syslogs-topic

Here we created a topic named “syslogs-topic”.

- Create a log sink.

In the context of Pub/Sub, a sink is what takes logs from Cloud Logging and pushes them into a Pub/Sub topic.

From there, subscribers (like Secops) can consume them in near real time.

# gcloud logging sinks create syslogs-sink \
pubsub.googleapis.com/projects/okkes-secops-2025/topics/syslogs-topic \
--log-filter='logName:"syslog"'

Here we created a log sink named “syslogs-sink” with “syslogs-topic” as its destination. It pushes every log entry from Cloud Logging whose logName field contains “syslog” to the Pub/Sub topic.

Log sink filters are very powerful and support logical operators such as AND and OR. For example, we could forward only authentication failure logs from syslog:

--log-filter='logName:"syslog" AND (jsonPayload.message:"auth failed" OR textPayload:"auth failed")'
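Before attaching a filter to a sink, it can help to preview which entries it actually matches. A minimal sketch using `gcloud logging read`; the helper name `preview_filter` is hypothetical, and the one-day window and five-entry limit are arbitrary choices.

```shell
# Hypothetical helper: show the most recent entries matching a Logging
# query filter, so the filter can be checked before it is used in a sink.
preview_filter() {
  local filter="$1"
  gcloud logging read "$filter" --freshness=1d --limit=5 --format=json
}
```

Usage: `preview_filter 'logName:"syslog" AND (jsonPayload.message:"auth failed" OR textPayload:"auth failed")'`. If nothing comes back, either no matching logs were written in the last day or the filter needs adjusting.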

If you look closely at the output of the sink creation command, you will notice this message:

“Please remember to grant `serviceAccount:SERVICE_ACCOUNT` the Pub/Sub Publisher role on the topic.” This service account is the sink's writer identity, the Cloud Logging service account of our Secops project, where we created our Pub/Sub topic.

- Let’s grant the required role to that service account:

# gcloud pubsub topics add-iam-policy-binding syslogs-topic \
--member="serviceAccount:SERVICE_ACCOUNT" \
--role="roles/pubsub.publisher"
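Instead of copying the service account from the command output, you can read it from the sink: the sink's `writerIdentity` field already carries the `serviceAccount:` prefix that `--member` expects. A minimal sketch; the helper name `grant_sink_publisher` is hypothetical.

```shell
# Hypothetical helper: grant a sink's writer identity permission to publish
# to the sink's destination topic.
grant_sink_publisher() {
  local sink="$1" topic="$2" writer
  # writerIdentity looks like "serviceAccount:service-...@gcp-sa-logging.iam.gserviceaccount.com"
  writer="$(gcloud logging sinks describe "$sink" --format='value(writerIdentity)')"
  if [ -z "$writer" ]; then
    echo "could not read writerIdentity for sink $sink" >&2
    return 1
  fi
  gcloud pubsub topics add-iam-policy-binding "$topic" \
    --member="$writer" \
    --role="roles/pubsub.publisher"
}
```

Usage: `grant_sink_publisher syslogs-sink syslogs-topic`.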

- At this point, we will create a feed on Secops SIEM for our Pub/Sub Topic:

The source type is Google Cloud Pub/Sub Push, and the log type is “Unix System” because we are going to ingest Unix syslog.

After clicking Submit, you will see the endpoint information. Note it down; the push subscription will use it as its endpoint URL.

Click “Done”. Now our feed is ready to accept Unix Syslog coming from our Pub/Sub topic.

- Next, we will create a subscription for our Pub/Sub Topic:

In Google Cloud Pub/Sub, a subscription is a named resource that represents the connection between a topic and a subscriber application.

- A topic is where messages are published.

- A subscription defines how those messages are delivered to subscribers.

When you create a subscription on a topic, Pub/Sub ensures that every message published to that topic from then on is delivered to that subscription (unless the message expires or the subscription is deleted).

There are two main types of subscriptions:

- Pull subscription
  - Your application explicitly requests (pulls) messages from Pub/Sub.
  - Example: a worker app calls the API to fetch messages when it’s ready.
- Push subscription
  - Pub/Sub automatically sends (pushes) messages to an HTTPS endpoint you specify.
  - Example: your web service receives incoming POST requests from Pub/Sub.
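The delivery type belongs to the subscription, not the topic, and it can be changed after creation. A minimal sketch using `gcloud pubsub subscriptions modify-push-config`; the helper names `to_push` and `to_pull` are hypothetical, and passing an empty endpoint clears the push configuration so the subscription reverts to pull.

```shell
# Hypothetical helper: turn a subscription into a push subscription.
to_push() {
  local sub="$1" endpoint="$2"
  # Pub/Sub will POST each message to this HTTPS endpoint.
  gcloud pubsub subscriptions modify-push-config "$sub" --push-endpoint="$endpoint"
}

# Hypothetical helper: turn a push subscription back into a pull subscription.
to_pull() {
  local sub="$1"
  # An empty endpoint removes the push configuration; clients pull instead.
  gcloud pubsub subscriptions modify-push-config "$sub" --push-endpoint=""
}
```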

Since we created a Secops feed of type Pub/Sub Push, let’s create a push subscription on our topic:

# gcloud pubsub subscriptions create syslogs-sub \
--topic=syslogs-topic \
--push-endpoint="ENDPOINT_URL" \
--ack-deadline=30

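We created a push subscription named “syslogs-sub” on our Pub/Sub topic. With --ack-deadline=30, Pub/Sub waits up to 30 seconds for the Secops endpoint to acknowledge each push request before retrying it.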

- For the subscription to authenticate, the Pub/Sub push feed must be in your Google Cloud project bound to Google SecOps. Authentication uses a JWT issued for your service account:

Navigate to Pub/Sub → Subscriptions in the Google Cloud console:

Edit “syslogs-sub”

Click “Enable Authentication”, choose the service account to which you granted the Pub/Sub Publisher role earlier, and click Update.
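The same setting can be applied from the command line. A minimal sketch, assuming `SERVICE_ACCOUNT_EMAIL` stands for the email address of the service account you would pick in the console; the helper name `enable_push_auth` is hypothetical.

```shell
# Hypothetical helper: make Pub/Sub attach an OIDC token (a JWT) for the
# given service account to every push request it sends to the endpoint.
enable_push_auth() {
  local sub="$1" sa_email="$2"
  # The flag expects a bare email, so drop a leading "serviceAccount:" if present.
  sa_email="${sa_email#serviceAccount:}"
  gcloud pubsub subscriptions update "$sub" \
    --push-auth-service-account="$sa_email"
}
```

Usage: `enable_push_auth syslogs-sub SERVICE_ACCOUNT_EMAIL`. The same `--push-auth-service-account` flag is also accepted by `gcloud pubsub subscriptions create`, so authentication can be enabled when the subscription is created.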

- You will see the logs and events on Secops:
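To check the pipeline end to end without waiting for real traffic, you can write a test entry yourself. A minimal sketch with `gcloud logging write`: writing to a log named `syslog` produces a logName ending in `/logs/syslog`, which matches the sink filter `logName:"syslog"`. The helper name and the sample message are hypothetical, and whether the Unix System parser extracts fields from a hand-written line like this is not guaranteed.

```shell
# Hypothetical helper: write one text entry to the "syslog" log in the
# current project so it matches the sink filter and flows through Pub/Sub.
send_test_syslog() {
  local msg="${1:-sshd[1234]: auth failed for user test}"
  gcloud logging write syslog "$msg" --severity=WARNING
}
```

Usage: run `send_test_syslog`, then search for the message in Secops after a short delay.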

Summary:

In our example, we exported syslog-formatted logs from Cloud Logging to Secops. Of course, the direct ingestion export filter log_id("syslog") would have been much simpler and more straightforward.

Direct ingestion export filters on Cloud Logging are versatile and powerful. In scenarios where those filters fall short, Pub/Sub can serve as the log ingestion mechanism from Cloud Logging to Secops.

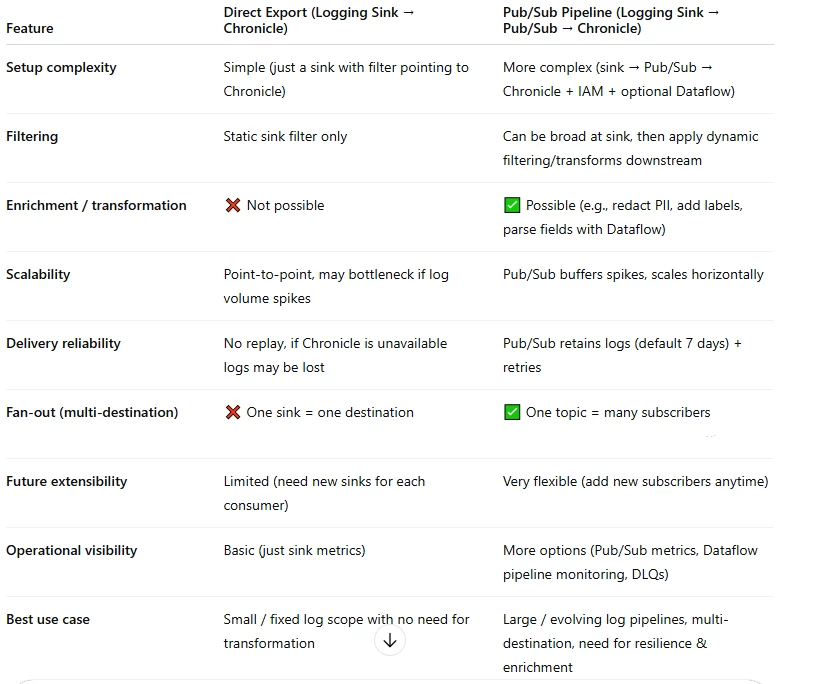

Finally, here is a comparison of both ingestion methods: